How to Create a Customer Service Training Program That Scales

Learn how to create a customer service training program that improves CSAT, reduces ramp time, and scales across bilingual BPO and nearshore teams.

TL;DR — Quick Takeaways

- Treat training as operational infrastructure, not a one-time onboarding task.

- Build a modular curriculum with a reusable core and client-specific modules.

- Use data from customers and QA to design targeted training programs.

- CallZent helps scale bilingual training systems for high-performance support teams.

Is your training program reducing risk and improving customer experience, or is it just a checklist item you run every time a new class starts?

Most companies approach training like a startup task. Build a deck. Run a few sessions. Let agents shadow. Hope quality improves. That approach breaks fast in a BPO environment, especially when you’re supporting multiple client brands, multiple industries, and two languages at once.

The fundamental difficulty in creating a customer service training program isn’t building content. It’s building a system that stays usable when a healthcare client needs compliance-heavy scripts, an e-commerce brand needs returns expertise, and a finance account needs tighter verification discipline. Generic programs don’t hold up under that pressure.

Introduction: Your Training Program as a Profit Center

A strong training program shouldn’t sit on the expense side of the conversation. It should show up in retention, quality, speed to proficiency, and customer loyalty.

That shift matters because customer service training has a business case. Research cited by SharpenCX on customer service training says companies can see a $3 return for every $1 invested in superior customer experience through training programs, and large companies can save as much as $60 million annually by improving manager training to reduce agent turnover.

A lot of leaders say they want excellent service, but they don’t define the behaviors, workflows, and escalation rules that produce it consistently. That’s where programs fall apart. If the only measure of training success is whether agents completed it, the business learns nothing.

For a BPO, the bar is higher. Training has to work across client accounts without forcing you to rebuild the whole program each time. It also has to reflect the actual conditions of live operations: queue pressure, difficult conversations, changing policies, QA standards, and bilingual communication.

Key takeaway: A customer service training program becomes a profit center when it shortens ramp time, improves service consistency, and gives managers a repeatable way to coach performance.

If your team is still defining service quality in vague terms, it helps to align training with a clear standard for excellent customer service. Without that, every trainer, supervisor, and client stakeholder will judge success differently.

Laying the Foundation with a Data-Driven Needs Assessment

The biggest mistake in training design happens before the first lesson is written. Teams assume they already know the problem.

They usually don’t.

Before building content, collect two kinds of evidence. First, what customers are struggling with. Second, where agents are struggling internally. Both matter. If you only look at customer complaints, you’ll miss process and skill issues. If you only look at internal QA, you’ll miss what customers care about.

Start with the customer side

Use customer-facing inputs first:

- Support tickets: Look for repeat reasons for contact, escalation triggers, and language-specific friction.

- Survey feedback: Review open-text comments, not just scores.

- Call and chat transcripts: These show where customers get confused, frustrated, or feel unheard.

- Drop-off patterns: In chat, email, or IVR flows, abandonment often points to unclear process design or poor agent guidance.

This matters financially. According to eLearning Industry’s write-up on customer service training KPIs, companies that invest in targeted customer service training informed by customer analytics can achieve 50% higher revenue and 55% higher share of wallet through emotionally engaging interventions.

That doesn’t mean every team should rush into empathy workshops first. It means the training should reflect what customers experience in reality. In practice, that often includes response expectations, clarity, ownership, and confidence.

Audit internal performance before writing modules

Customer data tells you where experience breaks. Internal audits tell you why.

Review:

- QA evaluations: Find recurring misses by behavior category, not just overall score.

- Supervisor observations: New agents often sound confident before they are accurate, or accurate before they are confident.

- Knowledge checks: Product gaps and policy confusion show up fast in scenario-based quizzes.

- Escalation logs: If one issue type always gets transferred, training likely isn’t preparing agents to resolve it.
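The QA review above can be reduced to a simple tally. The following is a minimal sketch, assuming QA misses have been exported as one record per missed behavior. The field names (`agent`, `category`, `language`) and the sample records are hypothetical and do not reflect any particular QA tool's schema.

```python
from collections import Counter

# Hypothetical export: one record per missed behavior on a QA evaluation.
qa_misses = [
    {"agent": "A01", "category": "verification", "language": "es"},
    {"agent": "A02", "category": "verification", "language": "en"},
    {"agent": "A03", "category": "verification", "language": "es"},
    {"agent": "A01", "category": "documentation", "language": "en"},
    {"agent": "A04", "category": "documentation", "language": "es"},
    {"agent": "A02", "category": "closing", "language": "en"},
]


def recurring_misses(records, min_count=2):
    """Return (category, count) pairs seen at least min_count times, most frequent first."""
    counts = Counter(record["category"] for record in records)
    return [(category, n) for category, n in counts.most_common() if n >= min_count]


print(recurring_misses(qa_misses))
# [('verification', 3), ('documentation', 2)]
```

Counting by behavior category rather than by overall score is the point: a one-off miss such as `closing` is filtered out, while repeated misses surface as candidates for a module.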

A useful way to organize this discovery work is with an effective training plan template that separates business goals, learner gaps, and delivery decisions. That’s much better than collecting notes in scattered spreadsheets and trying to reverse-engineer a curriculum later.

Training should solve a verified operational problem. If you can’t name the problem clearly, you aren’t ready to build the lesson.

Turn findings into SMART goals

Training goals need operational language, not motivational language.

Weak goal: “Improve customer service skills.”

Strong goal: “Increase first contact resolution on billing contacts” or “reduce delayed responses on Spanish chat queues,” each tied to a measured baseline, a numeric target, and a deadline so the goal is actually SMART.

The needs assessment should produce goals like these:

| Training area | Operational signal | Example training response |
|---|---|---|
| Product knowledge | Agents overuse escalation | Add guided troubleshooting drills |
| Soft skills | Customers report tone issues | Add empathy and active listening role-play |
| Process adherence | QA flags missed steps | Build step-by-step workflow practice |
| Bilingual consistency | English and Spanish interactions score differently | Separate language calibration and coaching |

When teams skip baseline measurement, they cannot determine whether the training worked or whether the operation became easier. That’s why performance visibility matters before launch. If you’re still tightening your reporting discipline, this guide to monitoring call center performance is a practical place to start.
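A goal with a recorded baseline can be checked mechanically. The sketch below is one possible way to represent it, not a prescribed format. The `TrainingGoal` class, its field names, and the numbers (a 62% baseline, a 70% target, the deadline) are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TrainingGoal:
    metric: str       # e.g. "FCR"
    segment: str      # e.g. "billing contacts"
    baseline: float   # measured before training launches
    target: float     # value the training is expected to reach
    deadline: date

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far."""
        gap = self.target - self.baseline
        if gap == 0:
            return 1.0
        return (current - self.baseline) / gap


# Illustrative numbers only.
goal = TrainingGoal("FCR", "billing contacts", baseline=0.62, target=0.70,
                    deadline=date(2030, 6, 30))
print(f"{goal.progress(0.66):.0%} of the gap closed")
```

Because progress is measured against the gap, the same calculation works for metrics where lower is better, such as response time: set the target below the baseline.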

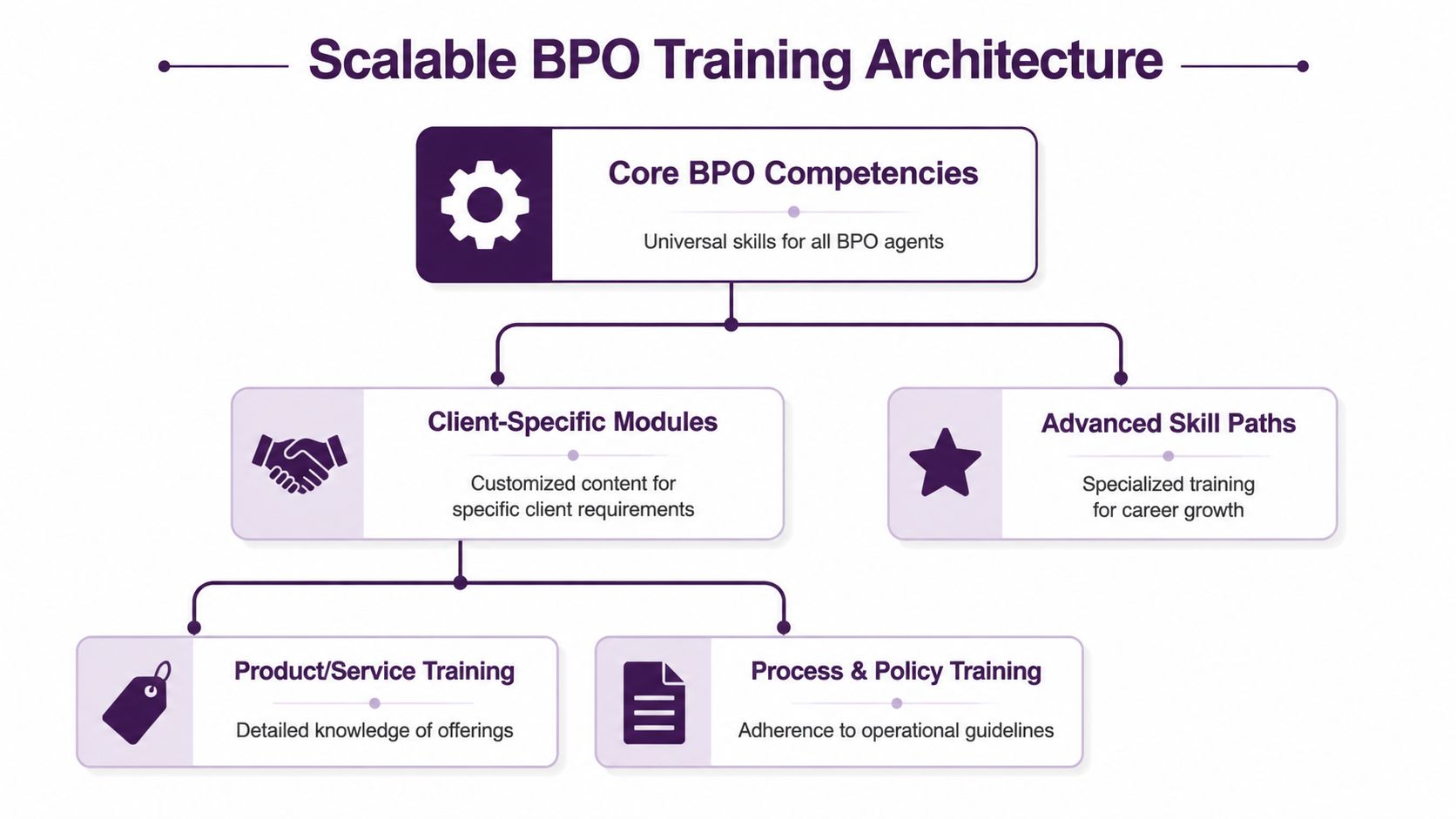

Designing a Scalable Curriculum for BPO Environments

How do you train for five clients, two languages, and three regulated workflows without rebuilding the program every time a new account goes live?

You start with architecture, not slides. In a nearshore BPO, training has to serve two goals at once: get agents productive fast, and keep the curriculum reusable across clients with very different rules, products, and customer expectations.

Build the core around transferable production skills

A scalable curriculum starts with a shared core that every agent completes before entering any client program. This is the layer that should survive account turnover, new launches, and cross-training.

The core usually covers:

- Communication control: Opening the interaction, confirming intent, setting expectations, explaining next steps, and closing clearly.

- De-escalation: Slowing down tense calls, acknowledging frustration without overcommitting, and regaining call structure.

- System execution: Notes, tagging, disposition codes, CRM updates, and clean handoffs to the next queue or department.

- Compliance fundamentals: Verification, data handling, privacy rules, and account protection practices that apply across the client mix you support.

- Channel discipline: Voice, chat, email, and SMS each require different pacing, formatting, and response standards.

- Bilingual service consistency: How to deliver the same policy, tone, and outcome in English and Spanish, even when the phrasing cannot be translated word for word.

That last point gets missed in generic training guides. In bilingual operations, consistency is not just a language issue. It is a risk issue. If an English interaction is concise and compliant, but the Spanish version sounds vague or skips a required disclosure, the problem is operational, not linguistic.

Separate what stays constant from what changes by client

Once the core is stable, build client training as modular add-ons. Many BPO teams waste time here by recreating full onboarding tracks for each account, then spending the next six months updating the same soft-skills content in four different places.

A better approach is simple. Keep universal behaviors in the core. Put account-specific material into modules that can be swapped in by client, industry, or queue type.

Those modules usually include:

- brand voice and approved phrasing

- product or service knowledge

- workflows and escalation rules

- systems specific to the account

- industry regulations

- client exceptions that override the standard process

For example, a healthcare module should train agents on verification sequence, appointment logic, sensitive language, and documentation standards. A finance module should focus on authentication, disclosure wording, fraud flags, and strict process adherence. Both programs still rely on the same core skills: call control, documentation, empathy, and bilingual clarity.

This structure also makes staffing more flexible. If volume shifts from retail to insurance, you are not retraining from zero. You are adding the right module on top of a foundation the agent already has.

Practical rule: If the skill applies across accounts, keep it in the core. If it changes by client, system, product, or regulation, build it as a module.
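The core-plus-modules rule can be expressed as a small data structure. This is a minimal sketch under stated assumptions: the identifiers (`CORE`, `CLIENT_MODULES`, `learning_path`) and the client keys are hypothetical, and the module names simply mirror the healthcare and finance examples in this section.

```python
# Shared core every agent completes before any client program.
CORE = [
    "communication_control",
    "de_escalation",
    "system_execution",
    "compliance_fundamentals",
    "channel_discipline",
    "bilingual_consistency",
]

# Client-specific add-ons (hypothetical client keys).
CLIENT_MODULES = {
    "healthcare_client": [
        "verification_sequence",
        "appointment_logic",
        "sensitive_language",
        "documentation_standards",
    ],
    "finance_client": [
        "authentication",
        "disclosure_wording",
        "fraud_flags",
        "process_adherence",
    ],
}


def learning_path(client, completed=()):
    """Core first, then the client's modules, skipping anything already completed."""
    done = set(completed)
    return [module for module in CORE + CLIENT_MODULES[client] if module not in done]


# An agent who already finished the core only needs the finance modules.
print(learning_path("finance_client", completed=CORE))
# ['authentication', 'disclosure_wording', 'fraud_flags', 'process_adherence']
```

A new hire gets the full path, while a cross-trained agent gets only the delta, which is the staffing flexibility described above.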

Design for reuse at the lesson level

Modularity fails when lessons are written like one-off launch decks. Every module should follow the same internal format so trainers, QA leads, and operations managers can update it without rewriting everything around it.

Use a lesson structure like this:

- Objective: The task the agent must perform in production.

- Business context: Why the step matters to the customer, the client, and the KPI tied to the interaction.

- Required process: The exact workflow, decision points, and system actions.

- Language standards: Approved phrasing, prohibited phrasing, and equivalent versions in English and Spanish where needed.

- Scenario practice: Realistic calls, chats, or tickets with common failure points.

- Validation: Quiz, observed simulation, nesting checklist, or QA-style evaluation.

This format keeps version control cleaner. It also makes customization faster when a new client enters a familiar vertical. If you already support one healthcare account, a second healthcare launch should require net-new content only where the workflow, systems, or compliance rules differ.
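The six-part lesson format above lends itself to a fixed schema that can flag incomplete lessons before they reach a class. The following is a sketch, assuming lessons are stored as structured records. The `Lesson` class, the `gaps` method, and the sample content are hypothetical.

```python
from dataclasses import dataclass, field

REQUIRED_LANGUAGES = ("en", "es")


@dataclass
class Lesson:
    objective: str
    business_context: str
    required_process: list[str]
    language_standards: dict[str, list[str]]  # language code -> approved phrasing
    scenarios: list[str] = field(default_factory=list)
    validation: str = ""

    def gaps(self) -> list[str]:
        """List the parts of the lesson that are still missing."""
        problems = []
        if not self.required_process:
            problems.append("required_process is empty")
        for lang in REQUIRED_LANGUAGES:
            if not self.language_standards.get(lang):
                problems.append(f"no approved phrasing for '{lang}'")
        if not self.scenarios:
            problems.append("no scenario practice")
        if not self.validation:
            problems.append("no validation step")
        return problems


# Sample lesson with English phrasing only (illustrative content).
lesson = Lesson(
    objective="Verify the caller before discussing account details",
    business_context="Protects the customer and the client's compliance record",
    required_process=["Ask for full name", "Confirm two identifiers", "Log the result"],
    language_standards={"en": ["May I confirm your date of birth?"]},
    scenarios=["Caller refuses to share an identifier"],
    validation="Observed simulation",
)
print(lesson.gaps())
# ["no approved phrasing for 'es'"]
```

Checking for approved phrasing in both languages at the schema level keeps a Spanish gap from being discovered only after agents are on the queue.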

Match training time to production reality

Formal instruction has a place, but it should not carry the whole program. Agents learn judgment by applying the material in situations that feel like live work.

A practical mix in BPO training looks like this:

| Learning mode | What it looks like in practice | Where it works best |
|---|---|---|
| Hands-on practice | Simulations, mock calls, CRM tasks, workflow drills | Systems use, call control, escalation handling |
| Coached learning | Calibration sessions, peer reviews, supervisor feedback | Tone, language consistency, gray-area decisions |
| Formal instruction | Trainer-led sessions, SOP review, quizzes, process walkthroughs | Compliance basics, product updates, policy changes |

Operations teams often overuse the formal layer because it is easier to schedule and document. It is also where retention drops fastest. If a lesson covers a difficult workflow, bilingual objection handling, or regulated disclosures, agents need guided repetition before they touch the queue.

Quality should be built into that practice, not checked after the fact. Training modules should use the same evaluation logic your supervisors and analysts apply in production. These call center quality assurance best practices are useful as a reference point when you map training exercises to scorecards and coaching standards.

Build bilingual modules for equivalence, not translation

Nearshore teams need a curriculum that treats language as part of operations design. English and Spanish content should produce the same customer outcome, even when sentence structure, tone, and cultural expectations differ.

That means bilingual modules should include:

- side-by-side approved phrasing for required steps

- examples of literal translations that create confusion

- tone calibration for high-friction conversations

- role-play by language, not just by topic

- QA rubrics that score clarity and compliance in both languages

I have seen strong English programs fail in Spanish queues because the team translated scripts instead of adapting them. The result was predictable: longer handle time, lower confidence, and uneven QA scores. A modular curriculum prevents that by treating language adaptation as part of the lesson design, not an afterthought.

At this stage, platforms that support onboarding portals and structured learning paths can help centralize version control. For example, CallZent offers onboarding workflows and interactive learning paths for agent training, which is useful when multiple client updates need to be managed in one operating environment.

Choosing Delivery Methods for Bilingual Nearshore Teams

Delivery method shapes retention more than is often acknowledged. A solid curriculum can still fail if it reaches agents in the wrong format.

In a bilingual nearshore operation, the question isn’t whether live or self-paced training is better. The question is which parts need real-time coaching, which parts need standardization, and where language nuance can break consistency.

Live instruction works best for nuance

Instructor-led training is still the fastest way to correct tone, pacing, hesitation, and cultural mismatch.

For bilingual teams, live sessions are especially useful for:

- role-playing difficult calls in English and Spanish

- practicing explanation clarity for non-native phrasing

- calibrating soft skills across trainers and supervisors

- handling sensitive interactions, such as claims, billing disputes, or care coordination

A scripted sentence can be technically correct and still sound cold, overly direct, or vague to the customer. Live coaching catches that.

E-learning works best for consistency

Self-paced modules work well when the priority is standardization.

Use them for:

- product updates

- policy changes

- CRM navigation

- account verification steps

- knowledge checks and refreshers

If a client changes return policy language or introduces a new escalation path, an e-learning module is cleaner than re-running classroom training for every team. It’s also easier to version and audit.

The trade-off is obvious. E-learning teaches facts. It doesn’t reliably fix judgment, tone, or emotional control, and it doesn’t build conversational skill by itself.

Blended delivery is usually the right answer

For most nearshore BPO teams, blended delivery wins because it respects both speed and quality.

A practical model looks like this:

| Delivery method | Best use | Main limitation |
|---|---|---|
| Live ILT | Coaching nuance, role-play, calibration | Harder to scale quickly |
| E-learning | Standardized knowledge, updates, quizzes | Weak for behavior change |
| Blended | Faster rollout plus live reinforcement | Requires tighter coordination |

The bilingual piece matters here. A lot of companies think bilingual support means translation. It doesn’t. It means agents need to understand audience expectations, formality levels, conversational pacing, and when directness helps or hurts. That becomes more manageable when training content is paired with live practice and language-specific feedback.

If your operation handles North American customers across channels, building around bilingual support standards helps managers coach beyond fluency and toward customer confidence.

In bilingual service, “correct” language isn’t enough. Customers judge clarity, tone, and trust in the first few seconds.

Implementing Your Program and Onboarding with Precision

A training plan fails in rollout long before anyone calls the content bad.

Most of the damage comes from poor sequencing. Teams mix orientation with onboarding, launch too much content at once, skip pilot testing, or push agents into production before supervisors are ready to coach the new standard.

Pilot before full rollout

Run the program with a small group first.

That pilot should test:

- whether lessons match live interaction difficulty

- whether system steps are current

- whether quizzes are measuring useful knowledge

- whether supervisors can coach from the same standards trainers use

- whether English and Spanish materials are aligned in meaning, not just wording

A pilot also flushes out operational issues that don’t show up in a slide review. Broken links, outdated SOPs, missing call examples, and contradictory client guidance are common.

Separate orientation from onboarding

A lot of leaders still use those terms like they mean the same thing. They don’t.

Orientation introduces the company, tools, policies, and expectations. Onboarding builds job readiness over time through practice, coaching, and measured performance. This explanation of the difference between orientation and onboarding is useful because it mirrors what operations teams see every day: one is an introduction, the other is a performance ramp.

A practical first 90 days

A solid onboarding path usually follows this rhythm:

- Early stage: Core skills, systems access, process walkthroughs, and observed simulations.

- Middle stage: Client modules, supervised practice, side-by-sides, and controlled queue exposure.

- Later stage: Nesting, QA review, targeted coaching, and full production readiness.

That sequence matters because agents need progressive exposure. Throwing them into full-volume queues too early creates bad habits that are hard to coach out later.

Good onboarding doesn’t just transfer knowledge. It controls risk while agents build judgment.

A simple launch checklist helps:

- Content locked: Current SOPs, scripts, and examples approved

- Systems ready: Logins, CRM access, telephony, and test environments working

- Coach readiness: Supervisors briefed on scoring and support expectations

- Client alignment: Stakeholders agree on ramp standards and escalation rules

- Feedback loop: Pilot notes and first-wave agent feedback captured
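The checklist above can also serve as a simple launch gate. This is a minimal sketch, assuming each item is tracked as a yes/no status. The keys and the `launch_blockers` helper are hypothetical names introduced for illustration.

```python
# Checklist items from the list above, keyed by hypothetical short names.
LAUNCH_CHECKLIST = {
    "content_locked": "Current SOPs, scripts, and examples approved",
    "systems_ready": "Logins, CRM access, telephony, and test environments working",
    "coach_readiness": "Supervisors briefed on scoring and support expectations",
    "client_alignment": "Stakeholders agree on ramp standards and escalation rules",
    "feedback_loop": "Pilot notes and first-wave agent feedback captured",
}


def launch_blockers(status):
    """Return checklist items that are not confirmed; missing items count as blockers."""
    return [item for item in LAUNCH_CHECKLIST if not status.get(item, False)]


status = {
    "content_locked": True,
    "systems_ready": True,
    "coach_readiness": False,
    "client_alignment": True,
}
print(launch_blockers(status))
# ['coach_readiness', 'feedback_loop']
```

Treating an unreported item as a blocker is a deliberate choice here: a launch should not proceed because someone forgot to report on a step.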

If you’re refining the broader experience around activation and early retention, these customer onboarding best practices connect well to service training because both depend on a smooth start and clear expectations.

Measuring Training ROI and Driving Continuous Improvement

Training starts proving its value after launch, not at graduation.

In a BPO setting, especially one supporting multiple clients, languages, and compliance standards, ROI only becomes visible when training data connects to production data. Without that link, teams argue from anecdotes. Operations says the class was fine. QA says agents are missing steps. The client hears inconsistent calls and wants retraining. None of that helps unless the root cause is clear.

Track the right KPIs first

TechClass, in its guide on creating an effective customer support training program, points to customer satisfaction (CSAT), first response time (FRT), and first contact resolution (FCR) as core training-related KPIs. Those are a sound starting point because each one reflects a different performance layer.

- CSAT shows how the interaction felt to the customer. Tone, clarity, ownership, and expectation setting usually affect it.

- FRT shows how quickly the team responds. Training can influence workflow discipline, but staffing and routing often carry more weight.

- FCR shows whether agents can solve the issue without repeat contact. Knowledge depth, system fluency, and policy judgment tend to sit behind it.

For nearshore teams, segment those KPIs further. Review them by language, client, queue type, and agent tenure. A blended average can hide the underlying issue. I have seen English performance hold steady while Spanish FCR slips after a policy update because the translated examples were too literal and the supervisor calibration lagged by a week.
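To see how a blended average can hide a language gap, consider the sketch below. It assumes contact records carry a client, a language, and a first-contact-resolution flag. The field names and the sample data are hypothetical.

```python
from collections import defaultdict

# Hypothetical contact records: English resolves 4 of 4, Spanish 2 of 4.
contacts = (
    [{"client": "retail", "language": "en", "resolved_first_contact": True}] * 4
    + [{"client": "retail", "language": "es", "resolved_first_contact": True}] * 2
    + [{"client": "retail", "language": "es", "resolved_first_contact": False}] * 2
)


def fcr_by_segment(rows, keys=("client", "language")):
    """Return the FCR rate per segment, where a segment is the tuple of the given keys."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [resolved, total]
    for row in rows:
        segment = tuple(row[key] for key in keys)
        totals[segment][0] += row["resolved_first_contact"]
        totals[segment][1] += 1
    return {segment: resolved / total for segment, (resolved, total) in totals.items()}


print(fcr_by_segment(contacts, keys=()))  # blended: {(): 0.75}
print(fcr_by_segment(contacts))
# {('retail', 'en'): 1.0, ('retail', 'es'): 0.5}
```

The blended 75% looks acceptable, yet the Spanish queue sits at 50%. Adding `queue_type` or a tenure band to `keys` would extend the same breakdown, provided those fields exist in the data.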

Diagnose the training gap, not just the metric

A KPI drop does not automatically mean the class failed.

Use patterns:

| KPI movement | Likely issue | Training response |
|---|---|---|
| CSAT drops, FCR stable | Agents resolve issues but sound rushed or rigid | Soft skills coaching, empathy drills, call reviews |
| FCR drops after a product change | Knowledge or workflow confusion | Update product module, run scenario refreshers |
| FRT worsens with good QA | Queue process issue more than training issue | Review staffing, workflow, and routing |
| QA compliance drops in one language | Translation or calibration gap | Rework bilingual examples and supervisor coaching |

This matters even more in a modular BPO program built for multiple industries. A healthcare client may require exact disclosure language and tighter documentation habits. A finance client may care more about verification accuracy, escalation control, and audit consistency. If one client line underperforms, resist the urge to retrain the whole floor. Fix the module, the scenario set, or the coaching guide tied to that workflow.
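The diagnostic patterns in the table can be written as explicit rules, which makes them easier to apply consistently in weekly reviews. This is a rough sketch, assuming the KPI movements have already been judged as yes/no signals. The `KpiSnapshot` fields and the `training_responses` function are hypothetical, and real thresholds would need to be defined by the operation.

```python
from dataclasses import dataclass


@dataclass
class KpiSnapshot:
    csat_dropped: bool = False
    fcr_dropped: bool = False
    frt_worsened: bool = False
    qa_healthy: bool = True
    product_changed: bool = False
    qa_drop_languages: tuple = ()  # languages where QA compliance dropped


def training_responses(snapshot):
    """Map KPI movements to the responses listed in the diagnostic table."""
    responses = []
    if snapshot.csat_dropped and not snapshot.fcr_dropped:
        responses.append("Soft skills coaching, empathy drills, call reviews")
    if snapshot.fcr_dropped and snapshot.product_changed:
        responses.append("Update product module, run scenario refreshers")
    if snapshot.frt_worsened and snapshot.qa_healthy:
        responses.append("Review staffing, workflow, and routing")
    if len(snapshot.qa_drop_languages) == 1:
        language = snapshot.qa_drop_languages[0]
        responses.append(f"Rework bilingual examples and supervisor coaching ({language})")
    return responses


print(training_responses(KpiSnapshot(fcr_dropped=True, product_changed=True)))
# ['Update product module, run scenario refreshers']
```

Note that the third rule returns an operations action rather than a training one, matching the table's point that not every KPI drop is a training problem.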

Build a coaching loop that agents can use

Post-launch coaching needs to be specific enough to change behavior on the next contact.

Supervisors should avoid comments such as “be more confident” or “show more empathy.” Agents improve faster when feedback points to the exact moment, the missed behavior, and the replacement language to use next time. These principles of constructive feedback align with what works in live operations. Clear, timely feedback is easier to coach, score, and repeat across teams.

A practical continuous-improvement loop looks like this:

- Review results weekly: Look at CSAT, FRT, FCR, QA, and customer comments together

- Find patterns by contact type: Billing, returns, claims, technical issues, renewals

- Update the right module: Don’t rewrite the whole program if one lesson is broken

- Coach managers too: Training quality often depends on supervisor consistency

- Recalibrate often: Especially across English and Spanish teams


The strongest programs stay modular on purpose. Core service behaviors remain stable across clients. Industry modules, compliance language, and scenario libraries change as needed. That structure lets nearshore teams customize fast without rebuilding training from scratch every time a new account launches or an existing client changes process.

If you need to build that kind of model, CallZent supports multilingual customer service operations with modular training design, bilingual workflows, and QA-led coaching systems built for real BPO conditions.